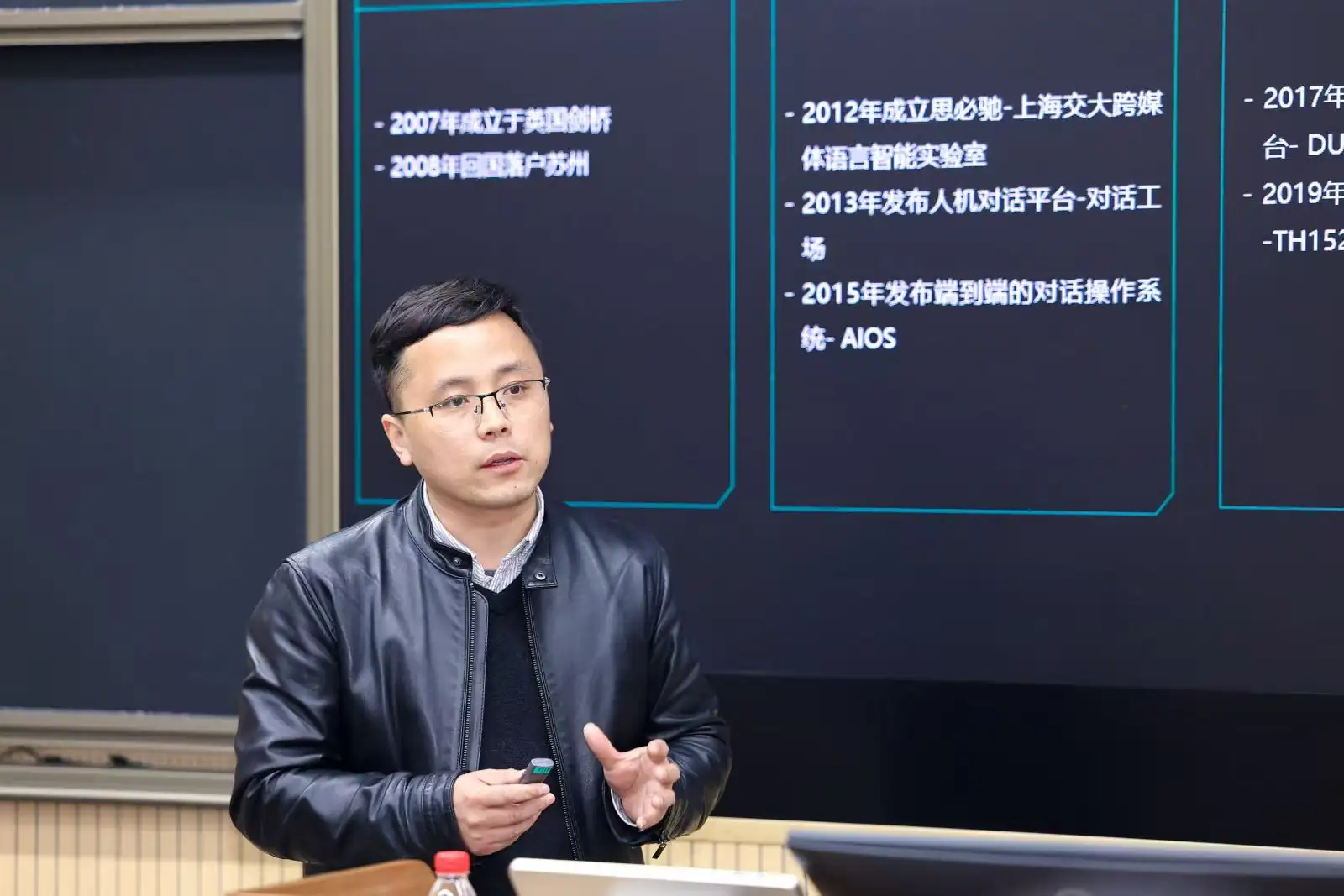

In this article, we explore how AI is redefining spatial hearing cognition. Bringing fresh insights to the discussion is Yongbo Chen, Product Director at AI Speech Co., Ltd.

At the intersection of acoustic engineering and information science, a quiet revolution is reshaping the way humans perceive and interact with sound. While conventional audio processing technologies remain constrained by the immutable laws of physics, artificial intelligence has stepped beyond the limits of basic signal processing. Empowered by human-like high-order cognitive intelligence, AI breaks through technical bottlenecks, turning acoustic environments into computable, interpretable, and customizable intelligent fields. This shift is not merely an upgrade of audio tools—it is a fundamental redefinition of spatial hearing cognition, led by innovations that bridge technical performance and human-centric experience.

Cognitive Leap: From Sound Wave Processing to Semantic Field Construction

Traditional audio systems suffer from a critical paradox: they process sound waves efficiently but fail to understand the meaning behind sound. Adaptive filtering can suppress noise in targeted frequency bands, yet it cannot tell a casual keyboard tap from an important alert. Beamforming enables directional sound pickup, but it cannot grasp the emotional tone or intent of a speaker. Trapped in ‘processing without cognition’, these systems have long been limited to the role of passive auxiliary tools.
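To make 'processing without cognition' concrete, here is a minimal sketch of delay-and-sum beamforming, one classic form of directional pickup. It is an illustration only and not AISpeech's implementation: the function names, the linear array geometry, and the sign convention for the angle are all assumptions. Note that the code only aligns and averages samples; nothing in it knows what the sound means.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value for room-temperature air


def steering_delays(mic_positions_m, angle_deg, sample_rate_hz):
    """Per-microphone arrival delays, in whole samples, for a far-field source.

    mic_positions_m: positions along a linear array, in meters.
    angle_deg: arrival angle from broadside. Assumed convention: for a
    positive angle the wavefront reaches lower-position microphones first.
    """
    sin_theta = math.sin(math.radians(angle_deg))
    raw = [p * sin_theta / SPEED_OF_SOUND * sample_rate_hz for p in mic_positions_m]
    earliest = min(raw)
    # Express every delay relative to the microphone that hears the sound first.
    return [round(d - earliest) for d in raw]


def delay_and_sum(channels, delays):
    """Undo each channel's arrival delay, then average the aligned channels."""
    length = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[n + d] for ch, d in zip(channels, delays)) / len(channels)
        for n in range(length)
    ]
```

When the delays match the true direction of arrival, the target adds up coherently while sound from other directions is averaged down. Deciding whether that target is a keyboard tap or an alert requires a separate semantic model.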

The breakthrough of deep learning AI models lies in building an organic connection between acoustic features and semantic understanding through autonomous learning capabilities. By modeling temporal acoustic features with the Transformer architecture, next-generation systems can separate mixed sound sources, construct a comprehensive sound semantic map, accurately identify speaker emotions, and even perceive the distribution of audience attention in a space. The integration of AISpeech’s DFM-2 language model with proprietary audio algorithms marks a pivotal paradigm shift—moving audio processing from the single goal of ‘hearing clearly’ to the advanced capability of ‘understanding precisely’.

Spatial Intelligence: The Sound Field as a Cognitive Interface

True acoustic intelligence is defined by a system’s ability to perceive and adapt to its surrounding environment. Modern audio systems are evolving into independent cognitive entities with spatial awareness. Through multi-modal sensor fusion, including acoustic arrays and environmental sensors, these systems generate real-time 3D acoustic maps and interpret the spatial attributes and semantic value of every acoustic event occurring within the space.

This cognitive capacity has unlocked a wave of practical innovations across industries. In smart classrooms, the system eliminates background noise while recognizing teachers’ behavioral patterns, automatically adjusting sound pickup and amplification to match teaching scenarios. In corporate meeting rooms, it senses the natural rhythm of speaker turn-taking, intelligently arbitrating pickup priorities for multiple participants to ensure smooth, uninterrupted communication. No longer just sound processors, these systems act as intelligent coordinators of spatial acoustic experiences.

Invisible Intelligence: The Philosophical Realization of Technology Invisibility

The essence of a ‘seamless user experience’ is that technology deeply understands and adapts to human behavioral habits, which requires predictive intelligence that anticipates user needs before they arise. AI-powered sound field management systems continuously learn spatial acoustic characteristics and usage patterns, enabling them to predict shifts in meeting formats, such as from free-flowing discussion to formal thematic presentations, and to complete sound field reconfiguration hundreds of milliseconds in advance.

These systems also grasp the physical essence of “natural human communication.” Using generative adversarial networks to simulate ideal acoustic environments, they eliminate unwanted reverberation and echo while shaping sound field properties that align with innate human auditory preferences. When a speaker moves around a meeting room, the system does not simply track the sound source; instead, it maintains acoustic perspective, ensuring the sound image listeners perceive matches the speaker’s visual position. This design aligns with the neurological foundations of natural face-to-face communication, making technology feel invisible rather than intrusive.

Acoustic Architecture for Mixed Reality

In the post-pandemic era, hybrid collaboration has become the new norm, erasing the acoustic boundary between physical and digital spaces. Intelligent audio systems have emerged as the foundational infrastructure for building an ‘acoustic metaverse’, enabling transparent cross-space acoustic transmission and coordinated acoustic management across physical and virtual environments.

A dynamic sound field optimization algorithm based on reinforcement learning balances local sound amplification and remote audio transmission demands, reserving optimal acoustic parameters for remote encoding and decoding to preserve sound clarity. Cutting-edge research is now focused on spatial acoustic digital twins, which allow remote participants to not only hear speakers clearly but also perceive their exact position and the acoustic properties of the physical room. This achieves true cross-space acoustic cognitive alignment, making remote collaboration as immersive and natural as in-person interaction.

Ethical Intelligence: Privacy and Equity in Acoustic Implementation

As acoustic systems grow more intelligent, ethical design has moved from an afterthought to a core pillar of technical architecture. Protecting user privacy and ensuring acoustic equity are no longer optional—they are essential to responsible innovation.

Differential privacy technology is embedded into the acoustic feature extraction process, ensuring the system can understand semantic content without exposing sensitive conversation details. The federated learning framework enables the system to learn universal acoustic patterns from multi-scenario data without centralizing raw audio files, eliminating data privacy risks associated with centralized storage.
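The two ideas above can be sketched together under simplifying assumptions. In this hypothetical example each site clips its local feature or model update, adds Laplace noise calibrated to the clipping bound (the standard Laplace mechanism), and a server averages the noisy updates weighted by sample count (federated averaging). The function names, the L1 clipping bound, and the epsilon value are illustrative choices, not details from AISpeech's system.

```python
import random


def clip_l1(vector, max_norm):
    """Scale a vector down so that its L1 norm is at most max_norm."""
    norm = sum(abs(v) for v in vector)
    if norm <= max_norm:
        return list(vector)
    return [v * max_norm / norm for v in vector]


def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def privatize(update, max_norm, epsilon, rng):
    """Clip an update, then add Laplace noise before it leaves the site."""
    clipped = clip_l1(update, max_norm)
    # Two clipped vectors differ by at most 2 * max_norm in L1 norm,
    # so that is the sensitivity used to scale the noise.
    scale = 2.0 * max_norm / epsilon
    return [v + laplace_noise(scale, rng) for v in clipped]


def federated_average(updates, sample_counts):
    """Average site updates weighted by sample count; raw audio is never sent."""
    total = sum(sample_counts)
    dim = len(updates[0])
    return [
        sum(u[i] * n for u, n in zip(updates, sample_counts)) / total
        for i in range(dim)
    ]


rng = random.Random(0)
site_updates = [[0.4, -0.2], [0.1, 0.3]]          # hypothetical local updates
noisy = [privatize(u, max_norm=1.0, epsilon=2.0, rng=rng) for u in site_updates]
global_update = federated_average(noisy, sample_counts=[120, 80])
```

A smaller epsilon gives stronger privacy but noisier updates, so a real deployment would have to tune that trade-off against recognition accuracy.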

In education, this ethical design translates to tangible social value. Intelligent sound field systems monitor the uniformity of acoustic coverage in classrooms, automatically adjusting amplification strategies to ensure students in the back rows enjoy the same auditory clarity as those in the front. This uses technology to advance educational equity, making inclusive learning a reality through acoustic intelligence.
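As a rough illustration of the coverage-uniformity idea, the sketch below assumes that a sound level in dB has already been measured for each classroom zone. It reports the spread between the loudest and quietest zones and proposes a per-zone boost toward the loudest zone. The zone names, the level values, and the 6 dB boost cap are hypothetical; a real system would also have to account for feedback and speaker placement.

```python
def coverage_spread_db(levels_db):
    """Difference between the loudest and quietest zone, in dB."""
    return max(levels_db.values()) - min(levels_db.values())


def zone_gain_offsets(levels_db, max_boost_db=6.0):
    """Boost each zone toward the loudest zone, capped at max_boost_db."""
    target = max(levels_db.values())
    return {
        zone: min(target - level, max_boost_db)
        for zone, level in levels_db.items()
    }


measured = {"front": 70.0, "middle": 66.0, "back": 61.0}  # hypothetical readings
spread = coverage_spread_db(measured)    # 9.0 dB between front and back
offsets = zone_gain_offsets(measured)    # the back row is limited by the cap
```

With these numbers the back row would need 9 dB to match the front but receives only the capped 6 dB, which shows why uniform coverage is also a question of hardware placement and not just gain.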

The Future Vision: Acoustic Intelligence as Cognitive Infrastructure

When audio systems evolve to understand scenarios, predict needs, shape experiences, and uphold ethical standards, they transcend the definition of standalone “devices” and become a critical component of spatial cognitive infrastructure. This evolutionary path is fully embodied and validated by AISpeech’s core technologies and product practices.

Built around the proprietary ClearSpeakAI algorithm, AISpeech has developed a complete intelligent audio ecosystem spanning from underlying algorithms to end-user devices. Its Ceiling Microphone series and Matrix Microphones are the physical manifestation of this architecture: they are not passive sound-capturing tools, but cognitive nodes embedded into physical spaces. A single device delivers a 4.5–8 meter pickup radius and 25 ms ultra-low latency, and cascaded deployment creates full-space, blind-spot-free sound field coverage. Beyond core functions like AI noise suppression, reverberation cancellation, and automatic gain control, the system intelligently perceives acoustic scenarios, makes autonomous pickup decisions, and anticipates user auditory needs to deliver seamless, natural sound amplification.
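The coverage claim can be checked with simple geometry. The sketch below is a planning aid under stated assumptions, not a vendor tool: it treats the quoted pickup radius as a horizontal distance, ignores ceiling height and room acoustics, and uses a hypothetical 12 m by 6 m room sampled on a 0.5 m grid.

```python
import math


def uncovered_points(width_m, depth_m, mics, radius_m, step_m=0.5):
    """Return grid points farther than radius_m from every microphone."""
    gaps = []
    for i in range(int(round(width_m / step_m)) + 1):
        for j in range(int(round(depth_m / step_m)) + 1):
            x, y = i * step_m, j * step_m
            # A point is covered if at least one microphone is within range.
            covered = any(
                math.hypot(x - mx, y - my) <= radius_m for mx, my in mics
            )
            if not covered:
                gaps.append((x, y))
    return gaps


# One ceiling microphone at the center, using the lower 4.5 m radius.
single = uncovered_points(12.0, 6.0, [(6.0, 3.0)], radius_m=4.5)

# Two cascaded microphones, each covering half of the room.
cascaded = uncovered_points(12.0, 6.0, [(3.0, 3.0), (9.0, 3.0)], radius_m=4.5)
```

In this example the single device leaves the corners of the room out of range, while the two-device layout leaves no uncovered grid points.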

This transformation from ‘device’ to ‘infrastructure’ integrates acoustic intelligence with ethical priorities such as privacy protection and equitable coverage. This next-generation infrastructure enables deeper interpersonal connection, more efficient knowledge transfer, and more inclusive participation experiences for all users.

Looking ahead, smart buildings will adopt acoustic systems as one of their core nervous systems, working in tandem with visual and environmental control systems to create spaces that adapt perfectly to human cognitive traits. In this evolution, AI is more than an optimization tool: it is an explorer redefining ‘sound as a cognitive medium’. It reminds us that the ultimate goal of improving auditory experience is not to chase abstract technical parameters, but to expand the boundaries of human communication and understanding.

This revolution will not culminate in a slightly clearer conference system. It will redefine how humans perceive, understand, and connect with each other in physical and digital spaces. When technology truly understands the meaning of sound, sound itself will gain a new, profound purpose in human life.

About AISpeech

AISpeech is a leading conversational AI company in China. Founded in Cambridge (2007) and headquartered in Suzhou, the company develops its proprietary end-to-end dialogue platform, 1+N distributed agent system, and AI voice chips. By integrating LLMs with cloud–edge deployment and hardware–software integration, AISpeech delivers advanced solutions across Smart Mobility, Smart Office, and AIoT. Supporting dozens of languages, AISpeech holds over 1,700 intellectual property rights and has contributed to more than 70 industry standards, driving intelligent transformation for partners worldwide.